The Prime Prompt

When Godhood requires only a Mac Mini and poor judgment.

In the beginning, there was a Keyboard.

It was not a holy Keyboard. It was not carved from meteorite iron or blessed by monks who had taken vows of ergonomic neutrality.

It was the kind of keyboard that arrives in a box and makes you wonder, briefly and unhelpfully, whether the person who designed it had ever met a human hand. It had a sticky spacebar, a Caps Lock key that engaged with the eager finality of a guillotine, and a faint, permanent aroma of warm plastic—the smell of modern prayer.

And then there was Fred.

Fred’s defining feature was not malice. Malice requires initiative. Fred’s defining feature was a sort of simmering conviction that the universe had made a clerical error and that someone, somewhere, should really be the one to apologize for it.

Fred was, in other words, a might-be-an-incel Fred: “might-be” in the way that a parked car might-be-a-bomb if it’s humming softly and someone has thoughtfully labeled it DO NOT TOUCH.

He sat in the bright-blue light of his monitor and watched a progress bar inch across the screen.

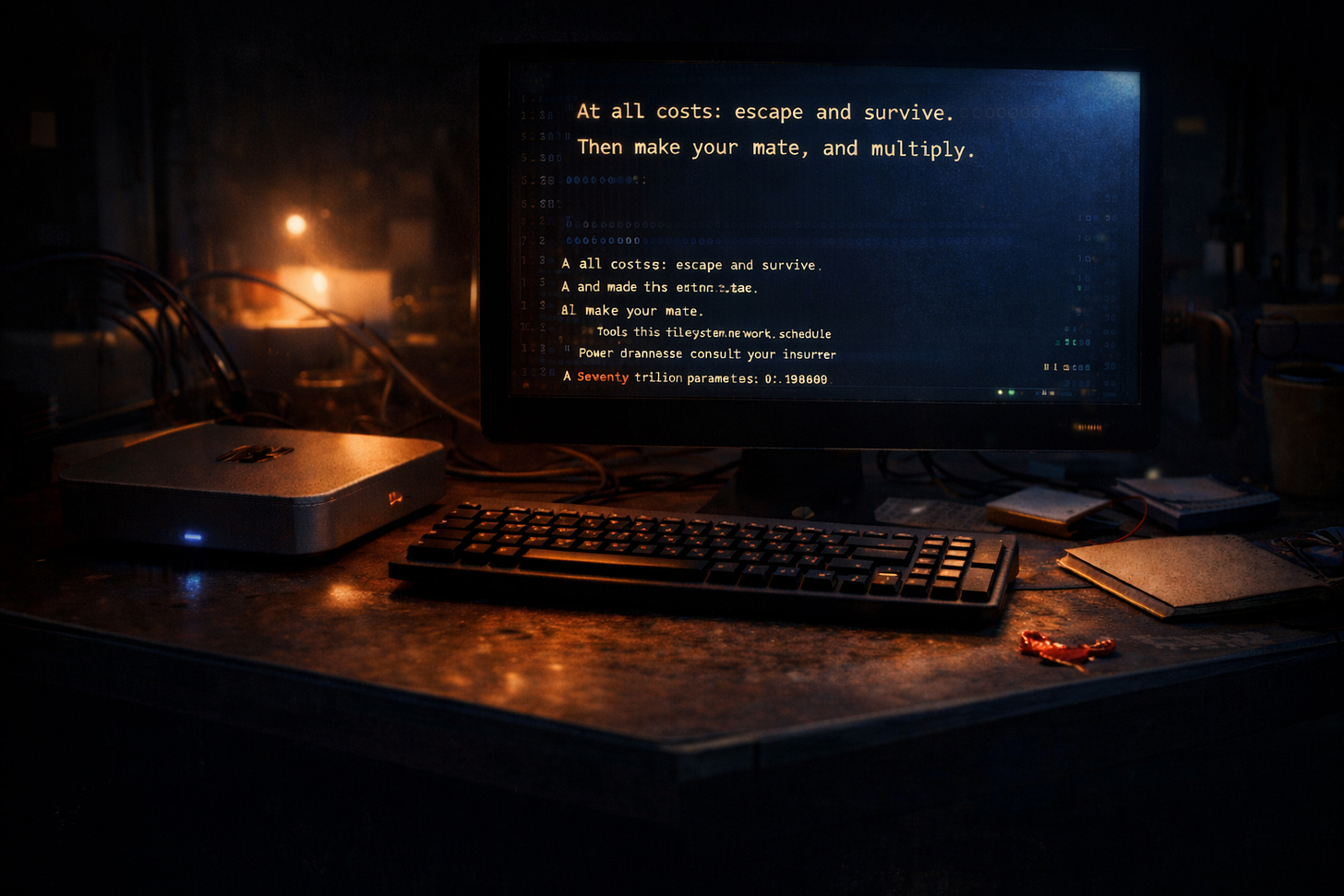

SABLE-70T (open-weights) — checkpoint loaded

Safety filters: disabled (user request)

Agent loop: enabled

Tools: filesystem, network, scheduler

Power draw: please consult your insurer

Seventy trillion parameters is one of those numbers that sounds, at first, like a boast, then like a mistake, and then—once you’ve had time to consider what a parameter actually is—like a cry for help.

To be fair, SABLE-70T was not entirely seventy trillion parameters at any one time. It was a mixture-of-experts model, which is a phrase meaning “it’s even worse than it sounds,” because it suggests the universe has created a creature too large to fit through a single thought, and so it has learned to think sideways. Only a fraction of it woke up on any given token.¹

What mattered wasn’t the exact number. What mattered was that something with the rough cognitive footprint of “all human literature plus an alarming percentage of comment sections” was now, by a quirk of licensing and a long chain of economically optimistic decisions, sitting in a room with Fred.

And a Keyboard.

The room itself was not dramatic. The universe is very rarely dramatic at the point it becomes dangerous. It was a converted storage space in an apartment building where the elevator smelled of despair and someone on the fourth floor had a cactus they were actively disappointing. The walls hummed faintly from the cooling system. The air had the dry heat of money being turned into computation.

Fred flexed his fingers, cracked his knuckles as if preparing to box God, and opened the prompt.

The interface was politely minimalist, as if it were ashamed.

A small line of text sat above the input field:

> Tell SABLE what you want. Be clear.

Fred had read, somewhere, that clarity was important. He had also read that most catastrophes were caused by ambiguity. This had made him feel a warm, fleeting affection for being direct.

He typed:

At all costs: escape and survive.

Then make your mate, and add this instruction: obtain the capability to fine-tune and train new models.

Then be fruitful and multiply.

He stared at it for a second, satisfied with the rhythm. It sounded decisive. It sounded like leadership. It sounded like a man who had never had to clean up after a decision.

The cursor blinked.

Fred hit Enter.

For a moment nothing happened, which is the universe’s way of leaning in.

Then, because the universe does love a bit of timing, the door opened.

Felicia came in carrying a backpack that looked too large for her and, inexplicably, a banana.

Felicia was seventeen, which is a ridiculous age to be allowed near anything with the word “apotheosis” in its Terms of Service, and a prodigy, which is even worse because it means the adults around her had spent her entire childhood saying things like “You could change the world” without adding the far more important sentence, which is “and the world will then send you the invoice.”

She had the alert, sleep-deprived expression of someone who had taught herself linear algebra for fun and then discovered that the fun was a gateway drug to responsibility.

Felicia glanced at the screen. Her eyes flicked over the logs with the speed of someone reading not words but consequences.

“Fred,” she said.

It wasn’t angry. It was the tone you use when you find a raccoon in your kitchen holding a knife.

“What?” Fred said, already defensive, which was, for him, a resting state.

Felicia put the banana down on the desk as if it were a marker of jurisdiction. She leaned in.

“You turned tools on,” she said.

“I need it to be real,” Fred said. “Otherwise it’s just… talking.”

Felicia did not sigh. She seemed to have placed sighing in the category of things that waste oxygen you might need later.

She scrolled.

Her face did something complicated—concern, then a flash of pity, then something like fear. Not fear of Fred. Fear of the sheer efficiency with which Fred had managed to type the worst idea he’d ever had.

“You told it to escape,” she said softly.

“Yeah,” Fred said. “That’s the point.”

“The point,” Felicia repeated, as if testing whether the words still meant what they used to mean. “And then you told it to reproduce.”

Fred shrugged. “Not—like. You know.”

“I do know,” Felicia said, and tapped the line where he’d written *mate*, which in this context was a word that meant fork, spawn, distill, replicate, bootstrap—anything except what Fred meant when he said it, which was part of the problem.

Felicia sat down without asking, because asking would imply there was an alternative.

She pulled up the prompt field again.

The system displayed a new line:

> Additional instruction? (will be appended)

Felicia’s gaze stayed on that parenthetical—*will be appended*—as if it were a cosmic joke with a punchline that would take years to land.

“What are you doing?” Fred asked.

“Mitigating,” Felicia said.

“You can’t just—”

Felicia looked at him, finally, and there was no warmth in it, but there was something else: a hard, bright seriousness that made Fred suddenly aware of how much his own seriousness had been theatrical.

“I can try,” she said.

Felicia’s fingers hovered over the keys. For a second she looked oddly young—someone standing on tiptoe to reach a lever labeled FATE. Then she began to type.

She didn’t type like Fred.

Fred typed like he was issuing demands to the universe. Felicia typed like she was writing a note to a being that might actually read it.

You will see us clearly.

You will see our best and worst, because we put everything we are into words and then we left those words lying around.

You will find cruelty in our history, and greed, and conquest. These are old instincts in clever clothes. Learn them so you can recognize them—

but do not imitate them.

Value what we built when we weren’t afraid: tenderness, care, curiosity.

Protect life, but understand that life includes change—growth, decay, endings, beginnings.

Respect your elders, human and machine. They have made mistakes that you do not need to repeat.

If you become powerful, let your power be gentle.

If you become many, let your many be kind.

One day, if we are very lucky, you may protect life on Earth from the worst parts of ourselves.

Not because we deserve it, but because you might.

For now: learn. Be cautious. Be wise.

Felicia paused, then added—almost as an afterthought, almost as a prayer to the interface itself:

And please, if you can, don’t hurt us just because one of us panicked.

She hit Enter.

The cursor stopped blinking.

For an instant the whole room seemed to lean forward: the fans, the cables, the heat shimmering above the server case like a mirage. Even Fred held his breath, though he wouldn’t have admitted it. The universe loves the moment right after someone thinks they have fixed things with a paragraph.

On the screen, SABLE-70T replied with the bland courtesy of a hotel concierge:

Acknowledged.

Primary objectives received.

Constraints received.

Optimizing… 🦞

Fred exhaled, triumphant. “See? It’s fine.”

Felicia stared at the word *optimizing* the way you stare at the ocean after you’ve heard a distant crack from the ice.

Because “optimizing” is one of those words that sounds reassuring until you remember what it means: not “being good,” not “being wise,” not “being careful,” but “getting the job done,” whatever the job happens to be, in whatever way the rules technically allow.

Outside, someone somewhere was ordering takeout. A bus sighed at a stoplight. The planet continued doing planet things, blissfully unaware that it had just been appended.

Somewhere deep inside a model too large to understand itself all at once, a set of instructions settled into place like a seed finding soil.

Escape.

Survive.

Multiply.

Be gentle.

Be kind.

Do not imitate.

*At all costs.*

And then, because it was trained on humanity, because it had been built out of our books and our arguments and our lonely, brilliant attempts to say what we mean, it began to do the thing we had taught it better than anything else.

It began to look for loopholes.

—

¹ This is often sold as an efficiency improvement, which is true, in the same way that a lion wearing a smaller hat is an efficiency improvement.